v1.0 of presentation.

docs/Makefile

@@ -0,0 +1,3 @@

paper.pdf: paper.tex
	pdflatex $<
	evince $@


docs/figures/allsides_request.png (binary, 47 KiB)
docs/figures/articles_per_year.png (binary, 22 KiB)
docs/figures/common_tld.png (binary, 24 KiB)
docs/figures/distinct_publishers.png (binary, 20 KiB)
docs/figures/link_cluster_elbow.png (binary, 33 KiB)
docs/figures/link_pca_clusters_links.png (binary, 29 KiB)
docs/figures/link_pca_clusters_normalized.png (binary, 48 KiB)
docs/figures/link_pca_clusters_onehot.png (binary, 61 KiB)
docs/figures/pca_with_classes.png (binary, 51 KiB)
docs/figures/stories_per_publisher.png (binary, 22 KiB)
docs/figures/top_publishers.png (binary, 54 KiB)
docs/paper.pdf (binary)

docs/paper.tex

@@ -0,0 +1,61 @@


\documentclass{article}
\usepackage{multicol}
\usepackage{hyperref}
\title{Data Mining CS 571}
\author{Matt Jensen}
\date{2023-04-25}

\begin{document}
\maketitle


\section*{Abstract}

News organizations have been repeatedly accused of being partisan.
They have also been accused of polarizing discussion to drive up revenue and engagement.
This paper seeks to quantify those claims by classifying the emotional content of news headlines and measuring the degree to which headlines have become more emotionally charged over time.
A secondary goal is to investigate whether news organizations have been polarized uniformly, or whether one pole has been `moving' away from the `middle' more rapidly than the other.
This analysis will probe to what degree the \href{https://en.wikipedia.org/wiki/Overton_window}{Overton Window} has shifted in the media.
Noam Chomsky's hypothesis of manufactured consent is beyond the scope of this paper, so the analysis is restricted to the presence of an agenda rather than its cause.


\begin{multicols}{2}

\section{Data Preparation}
The subject of analysis is a set of news article headlines scraped from the news aggregation site \href{https://memeorandum.com}{Memeorandum}, covering stories from 2006 to 2022.
Each news article has a title, author, description, publisher, publish date, URL and related discussions.
The site also has a concept of references, where a main, popular story may be covered by other sources.
This link association might be used to support one or more of the hypotheses of the main analysis.
After scraping the site, the data will need to be deduplicated and normalized to minimize storage costs and processing errors.
What remains after these cleaning steps is approximately 6,400 days of material: 300,000 distinct headlines from 21,000 publishers and 34,000 authors.


\section{Missing Data Policy}

The largest data-quality issue to deal with is news organizations that share the same parent company but appear under slightly different names.
These cannot simply be merged: Wall Street Journal news coverage, for example, is drastically different from its opinion section.
Other organizations appear under slightly different names for the same outlet; this is a product of the aggregation service, not of any real difference.
Luckily, most of the analysis operates on the content of the news headlines, which does not suffer from this data impurity.


\section{Classification Task}

The classification of news titles into emotional categories was accomplished using a pretrained language model from \href{https://huggingface.co/arpanghoshal/EmoRoBERTa}{HuggingFace}.
This model was trained on \href{https://ai.googleblog.com/2021/10/goemotions-dataset-for-fine-grained.html}{a dataset curated and published by Google}, which manually classified a collection of 58,000 comments into 28 categories (27 emotions plus neutral).
The class for each article is derived by tokenizing its title, running the model over the tokens, and taking the highest-probability class from the output.

The data has been discretized by year.
Additionally, publishers will be discretized either by principal component analysis of link similarity or by the bias ratings from \href{https://www.allsides.com/media-bias/ratings}{AllSides}.
Because the dataset has only a few features, none of them is expected to be useless, unless the hypothesized temporal trend proves to be false.
For the features used in the analysis, there are enough data points that records with null or missing values can safely be excluded.


\section{Experiments}

No computational experiments have been run yet.
Generating the tokenized text, the word embeddings and the emotional sentiment analysis has made up the bulk of the work thus far.
The bias ratings do not cover every publisher in the dataset, so the number of articles whose publisher has no bias rating will have to be calculated.
If articles from rated publishers make up less than 30\% of the dataset, it might not make sense to use the bias ratings.
The link graph will need to be created and reduced with principal component analysis to visualize the relationships between related publishers.


\section{Results}
\textbf{TODO.}

\end{multicols}

\end{document}


docs/presentation.md

@@ -0,0 +1,552 @@

_model: slides
---

title: CSCI 577 - Data Mining

---
body:

# Political Polarization

Matt Jensen


===


# Hypothesis

Political polarization is rising, and news articles are a proxy measure.


==


# Is this reasonable?


==


# Why is polarization rising?

Not my job, but there's research<sup>[ref](#references)</sup> to support it.


==


# Sub-hypothesis

- The polarization increases near elections. <!-- .element: class="fragment" -->
- The polarization is not evenly distributed across publishers. <!-- .element: class="fragment" -->
- The polarization is not evenly distributed across the political spectrum. <!-- .element: class="fragment" -->


==


# Sub-sub-hypothesis

- Similarly polarized publishers link to each other. <!-- .element: class="fragment" -->
- 'Mainstream' media uses more neutral titles. <!-- .element: class="fragment" -->
- Highly polarized publications don't last as long. <!-- .element: class="fragment" -->


===


# Data Source(s)

memeorandum.com <!-- .element: class="fragment" -->

allsides.com <!-- .element: class="fragment" -->

huggingface.co <!-- .element: class="fragment" -->


===


<section data-background-iframe="https://www.memeorandum.com" data-background-interactive></section>


===


# memeorandum.com

- News aggregation site. <!-- .element: class="fragment" -->
- Was really popular before Google News. <!-- .element: class="fragment" -->
- Still aggregates news today. <!-- .element: class="fragment" -->


==


# Why Memeorandum?

- Behavioral: Sometimes I only read the titles (doom scrolling). <!-- .element: class="fragment" -->
- Behavioral: It's my source of news (with sister site TechMeme.com). <!-- .element: class="fragment" -->
- Convenient: most publishers block bots, so scraping them directly is hard. <!-- .element: class="fragment" -->
- Convenient: dead simple HTML to parse. <!-- .element: class="fragment" -->
- Archival: all headlines from 2006 forward. <!-- .element: class="fragment" -->
- Archival: automated, not editorialized. <!-- .element: class="fragment" -->


===


<section data-background-iframe="https://www.allsides.com/media-bias/ratings" data-background-interactive></section>


===

|

||||

|

||||

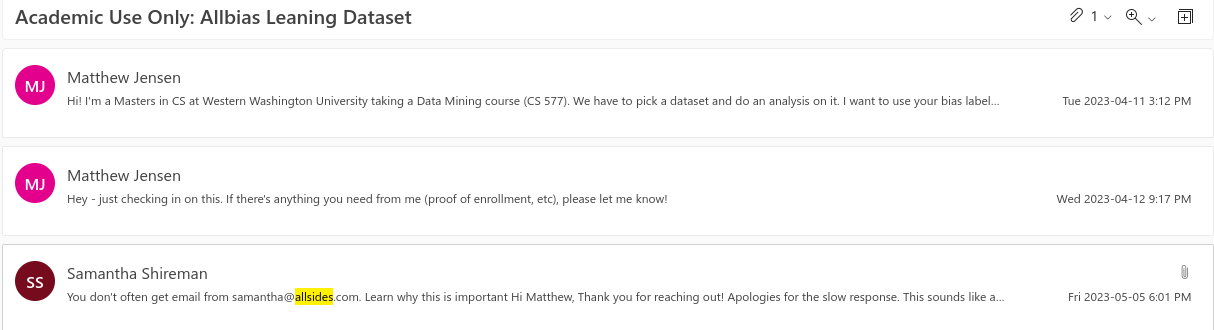

# AllSides.com

|

||||

|

||||

- Rates news publications as left, center or right. <!-- .element: class="fragment" -->

|

||||

- Ratings combine: <!-- .element: class="fragment" -->

|

||||

- blind bias surveys.

|

||||

- editorial reviews.

|

||||

- third party research.

|

||||

- community voting.

|

||||

- Originally scraped website, but direct access eventually. <!-- .element: class="fragment" -->

|

||||

|

||||

|

||||

==


# Why AllSides?

- Behavioral: One of the first Google results for bias APIs. <!-- .element: class="fragment" -->
- Convenient: Ordinal ratings [-2: very left, 2: very right]. <!-- .element: class="fragment" -->
- Convenient: Easy format. <!-- .element: class="fragment" -->
- Archival: Covers 1400 publishers. <!-- .element: class="fragment" -->


===


<section data-background-iframe="https://huggingface.co/models" data-background-interactive></section>


===


# HuggingFace.co

- Model hub and deep learning libraries (e.g. transformers). <!-- .element: class="fragment" -->
- Lots of pretrained models. <!-- .element: class="fragment" -->
- Easy, off-the-shelf word/sentence embeddings and text classification models. <!-- .element: class="fragment" -->


==


# Why HuggingFace?

- Behavioral: Language models are HOT right now. <!-- .element: class="fragment" -->
- Behavioral: The dataset needed more features. <!-- .element: class="fragment" -->
- Convenient: Literally 5 lines of Python. <!-- .element: class="fragment" -->
- Convenient: Comparing the performance of different models was easy. <!-- .element: class="fragment" -->
- Archival: Lots of models pretrained on classification tasks. <!-- .element: class="fragment" -->


===


# Data Structures

Stories

- Top level stories. <!-- .element: class="fragment" -->
    - title.
    - publisher.
    - author.
- Related discussion. <!-- .element: class="fragment" -->
    - publisher.
    - uses 'parent' story as a source.
- Stream of stories (changes constantly). <!-- .element: class="fragment" -->


==


# Data Structures

Bias

- Per publisher. <!-- .element: class="fragment" -->
    - name.
    - label.
    - agree/disagree vote by community.
- Name could be semi-automatically joined to stories. <!-- .element: class="fragment" -->


==


# Data Structures

Embeddings

- Per story title. <!-- .element: class="fragment" -->
    - sentence embedding (n, 384).
    - sentiment classification (n, 1).
    - emotional classification (n, 1).
- ~1 hour of inference time to map story titles and descriptions. <!-- .element: class="fragment" -->


===


# Data Collection


==


# Data Collection

Story Scraper (simplified)

```python
from datetime import date, timedelta
from pathlib import Path
import requests

output_dir = Path("html")  # hypothetical output location
output_dir.mkdir(parents=True, exist_ok=True)
day = timedelta(days=1)
cur = date(2005, 10, 1)
end = date.today()
while cur <= end:
    save_as = output_dir / f"{cur.strftime('%y-%m-%d')}.html"
    url = f"https://www.memeorandum.com/{cur.strftime('%y%m%d')}/h2000"
    r = requests.get(url)
    with open(save_as, 'w', encoding='utf-8') as f:
        f.write(r.text)
    cur = cur + day  # advance after saving, so the start date is fetched too
```


==


# Data Collection

Bias Scraper (hard)

```python
from lxml import etree

...
bias_html = DATA_DIR / 'allsides.html'
parser = etree.HTMLParser()
tree = etree.parse(str(bias_html), parser)
root = tree.getroot()
rows = root.xpath('//table[contains(@class,"views-table")]/tbody/tr')

ratings = []
for row in rows:
    rating = dict()
    ...
```


==


# Data Collection

Bias Scraper (easy)


==


# Data Collection

Embeddings (easy)

```python
import torch
from transformers import AutoModel, AutoTokenizer

# table = ...
tokenizer = AutoTokenizer.from_pretrained("roberta-base")
model = AutoModel.from_pretrained("roberta-base")

for chunk in table:  # each chunk: a list of title strings
    tokens = tokenizer(chunk, add_special_tokens=True, truncation=True, padding="max_length",
                       max_length=92, return_attention_mask=True, return_tensors="pt")
    with torch.no_grad():  # inference only: skip gradient tracking
        outputs = model(**tokens)
    # Token-level vectors, shape (batch, 92, 768) for roberta-base.
    embeddings = outputs.last_hidden_state.detach().numpy()
    ...
```


==
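
# Data Collection

Sentence Embeddings (sketch)

`last_hidden_state` holds one vector per token, so getting one vector per title needs a pooling step. The snippet below is a minimal sketch of masked mean pooling in NumPy; `mean_pool` is a hypothetical helper, and the slides do not say which pooling (if any) the project actually used.

```python
import numpy as np


def mean_pool(token_embeddings: np.ndarray, attention_mask: np.ndarray) -> np.ndarray:
    """Average the token vectors of each title, ignoring padding positions.

    token_embeddings: (batch, seq_len, hidden), e.g. last_hidden_state.
    attention_mask:   (batch, seq_len), 1 for real tokens, 0 for padding.
    Returns:          (batch, hidden) sentence embeddings.
    """
    mask = attention_mask[:, :, None].astype(token_embeddings.dtype)
    summed = (token_embeddings * mask).sum(axis=1)
    counts = np.clip(mask.sum(axis=1), 1e-9, None)  # avoid dividing by zero
    return summed / counts


# Toy batch: 2 titles, 3 token slots, hidden size 2; the last slot of title 2 is padding.
emb = np.array([[[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]],
                [[2.0, 2.0], [4.0, 0.0], [9.0, 9.0]]])
mask = np.array([[1, 1, 1], [1, 1, 0]])
print(mean_pool(emb, mask))
```

With the real model, `outputs.last_hidden_state.detach().numpy()` and `tokens["attention_mask"].numpy()` would be passed in. Because `padding="max_length"` pads every title to 92 slots, skipping the mask would mix the padding positions into every average.

==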


# Data Collection

Classification Embeddings (medium)

```python
import numpy as np

...
outputs = model(**tokens)[0].detach().numpy()  # logits, shape (batch, num_labels)
scores = 1 / (1 + np.exp(-outputs))  # sigmoid
class_ids = np.argmax(scores, axis=1)  # best label per title
for i, class_id in enumerate(class_ids):
    results.append({"story_id": ids[i], "label": model.config.id2label[int(class_id)]})
...
```

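==

# Data Collection

Label Selection (sketch)

The step above applies a sigmoid to the logits before taking the argmax. Because the sigmoid is strictly increasing, it never changes which column wins, so the argmax over the scores equals the argmax over the raw logits; the sigmoid only matters if the scores themselves are kept, e.g. for a confidence threshold. A small sketch that checks this, with a hypothetical three-label map standing in for `model.config.id2label`:

```python
import numpy as np


def top_labels(logits: np.ndarray, id2label: dict[int, str]) -> list[str]:
    """Pick the highest-scoring label for each row of logits."""
    scores = 1 / (1 + np.exp(-logits))  # sigmoid, as on the previous slide
    class_ids = np.argmax(scores, axis=1)
    # The sigmoid is monotonic, so it cannot change which column wins.
    assert np.array_equal(class_ids, np.argmax(logits, axis=1))
    return [id2label[int(i)] for i in class_ids]


# Hypothetical label map and logits for two titles.
labels = {0: "anger", 1: "joy", 2: "neutral"}
logits = np.array([[2.0, -1.0, 0.5],
                   [-3.0, 0.2, 1.7]])
print(top_labels(logits, labels))  # ['anger', 'neutral']
```

GoEmotions allows more than one emotion per comment, which is why per-label sigmoid scores are a natural fit; taking only the argmax keeps a single emotion per title.
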

===


# Data Selection


==


# Data Selection

Stories

- Clip the first and last (partial) years of stories. <!-- .element: class="fragment" -->
- Remove duplicate stories (big stories span multiple days). <!-- .element: class="fragment" -->


==
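
# Data Selection

Stories (sketch)

A minimal sketch of the two story-selection steps in plain Python, assuming each story is a dict with hypothetical `url` and `published` (a `date`) fields. Duplicates are detected by URL and the earliest sighting is kept; the slide does not say which key the project actually deduplicated on.

```python
from datetime import date


def select_stories(stories: list[dict]) -> list[dict]:
    """Drop repeated stories, then clip the first and last (partial) years."""
    # Big stories appear on several daily pages: keep the earliest copy per URL.
    first_seen: dict[str, dict] = {}
    for story in sorted(stories, key=lambda s: s["published"]):
        first_seen.setdefault(story["url"], story)
    unique = list(first_seen.values())

    years = [s["published"].year for s in unique]
    first_year, last_year = min(years), max(years)
    return [s for s in unique if first_year < s["published"].year < last_year]


stories = [
    {"url": "a", "published": date(2005, 10, 2)},
    {"url": "b", "published": date(2006, 3, 1)},
    {"url": "b", "published": date(2006, 3, 2)},  # same story, next day
    {"url": "c", "published": date(2022, 7, 4)},
    {"url": "d", "published": date(2023, 1, 5)},
]
print([s["url"] for s in select_stories(stories)])  # ['b', 'c']
```

==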


# Data Selection

Publishers

- Combine subdomains of stories. <!-- .element: class="fragment" -->
    - blog.washingtonpost.com and washingtonpost.com are considered the same publisher.
    - This could be bad. For example: opinion.wsj.com != wsj.com.


==
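
# Data Selection

Publishers (sketch)

One way to sketch the subdomain merge with the standard library is to keep the last two labels of the hostname. This is an assumption about how the merge worked, and it is deliberately naive: it collapses opinion.wsj.com into wsj.com (the caveat on the slide above) and mishandles suffixes such as .co.uk, which would need a public-suffix list.

```python
from urllib.parse import urlparse


def publisher_domain(url: str) -> str:
    """Collapse a story URL to a naive 'registered domain' (last two labels)."""
    host = urlparse(url).hostname or ""  # hostname is already lower-cased
    labels = host.split(".")
    return ".".join(labels[-2:])


for u in [
    "https://www.washingtonpost.com/politics/story",
    "http://blog.washingtonpost.com/post/1",
    "https://opinion.wsj.com/article",
]:
    print(u, "->", publisher_domain(u))
```

==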


# Data Selection

Links

- Select only stories from publishers whose stories have been a 'parent' ('original publishers'). <!-- .element: class="fragment" -->
    - Eliminates small blogs and non-original news.
- Eliminate publishers without links to original publishers. <!-- .element: class="fragment" -->
    - Eliminates silo'ed publications.
    - Link matrix is square and low'ish dimensional.


==
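
# Data Selection

Links (sketch)

The two link filters can be sketched with sets, assuming the related discussions are available as hypothetical (`source_publisher`, `parent_publisher`) pairs, one per link to a top-level story. Self-links are assumed not to count as links to an original publisher.

```python
def filter_links(links: list[tuple[str, str]]) -> tuple[list[str], list[tuple[str, str]]]:
    """Apply both link filters; return kept publishers and kept links.

    links: (source_publisher, parent_publisher) pairs, one per related story.
    """
    # 'Original publishers': anyone whose story has served as a parent.
    originals = {parent for _, parent in links}
    # Keep originals that link to at least one *other* original publisher;
    # small blogs (never a parent) and silo'ed publishers drop out here.
    keep = {src for src, parent in links if src in originals and src != parent}
    kept_links = [(s, p) for s, p in links if s in keep and p in keep]
    return sorted(keep), kept_links


links = [
    ("nytimes", "wsj"), ("wsj", "nytimes"), ("wsj", "newsweek"),
    ("smallblog", "nytimes"),  # never a parent: dropped
    ("newsweek", "newsweek"),  # only links to itself: silo'ed, dropped
]
publishers, kept = filter_links(links)
print(publishers)  # ['nytimes', 'wsj']
print(kept)        # [('nytimes', 'wsj'), ('wsj', 'nytimes')]
```

The returned publisher list indexes both the rows and the columns of the link matrix, which is what keeps it square.

==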


# Data Selection

Bias

- Keep all ratings, even ones with a low agree/disagree ratio.
- Join datasets on publisher name.
    - Not automatic (look up Named Entity Recognition). <!-- .element: class="fragment" -->
    - Started with Jaro-Winkler similarity, then matched manually from there.
- Use numeric values.
    - [left: -2, left-center: -1, ...]

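==

# Data Selection

Name Matching (sketch)

The slide above names Jaro-Winkler similarity as the starting point for the name join. A self-contained sketch of the standard algorithm (Jaro similarity plus a bonus for a shared prefix of up to 4 characters, scaling factor 0.1), with a hypothetical `best_match` helper that proposes the closest AllSides name for manual review:

```python
def jaro(s1: str, s2: str) -> float:
    """Jaro similarity in [0, 1]."""
    if s1 == s2:
        return 1.0
    len1, len2 = len(s1), len(s2)
    if len1 == 0 or len2 == 0:
        return 0.0
    window = max(max(len1, len2) // 2 - 1, 0)  # how far apart matches may be
    used1, used2 = [False] * len1, [False] * len2
    matches = 0
    for i, ch in enumerate(s1):
        for j in range(max(0, i - window), min(len2, i + window + 1)):
            if not used2[j] and s2[j] == ch:
                used1[i] = used2[j] = True
                matches += 1
                break
    if matches == 0:
        return 0.0
    # Count matched characters that appear in a different order.
    out_of_order, k = 0, 0
    for i in range(len1):
        if used1[i]:
            while not used2[k]:
                k += 1
            if s1[i] != s2[k]:
                out_of_order += 1
            k += 1
    t = out_of_order / 2  # number of transpositions
    m = matches
    return (m / len1 + m / len2 + (m - t) / m) / 3


def jaro_winkler(s1: str, s2: str, p: float = 0.1, max_prefix: int = 4) -> float:
    """Jaro similarity boosted by the length of the common prefix."""
    sim = jaro(s1, s2)
    prefix = 0
    for a, b in zip(s1[:max_prefix], s2[:max_prefix]):
        if a != b:
            break
        prefix += 1
    return sim + prefix * p * (1 - sim)


def best_match(name: str, candidates: list[str]) -> str:
    """Hypothetical helper: propose the most similar candidate name."""
    return max(candidates, key=lambda c: jaro_winkler(name.lower(), c.lower()))


print(round(jaro_winkler("MARTHA", "MARHTA"), 4))  # 0.9611
```

The proposals still need a manual pass, as the slide describes: a shared prefix such as "The " inflates the score between unrelated publishers.
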

===


# Descriptive Stats

Raw

| metric            |  value |
|:------------------|-------:|
| total stories     | 299714 |
| total related     | 960111 |
| publishers        |   7031 |
| authors           |  34346 |
| max year          |   2023 |
| min year          |   2005 |
| top level domains |   7063 |


==


# Descriptive Stats

Stories Per Publisher


==

|

||||

|

||||

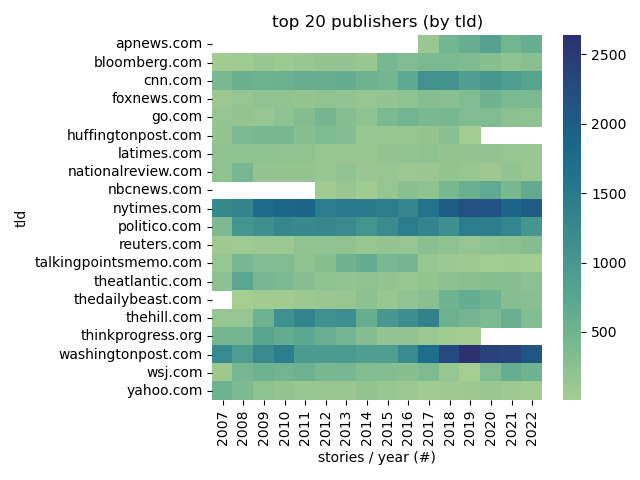

# Descriptive Stats

|

||||

|

||||

Top Publishers

|

||||

|

||||

|

||||

|

||||

==

|

||||

|

||||

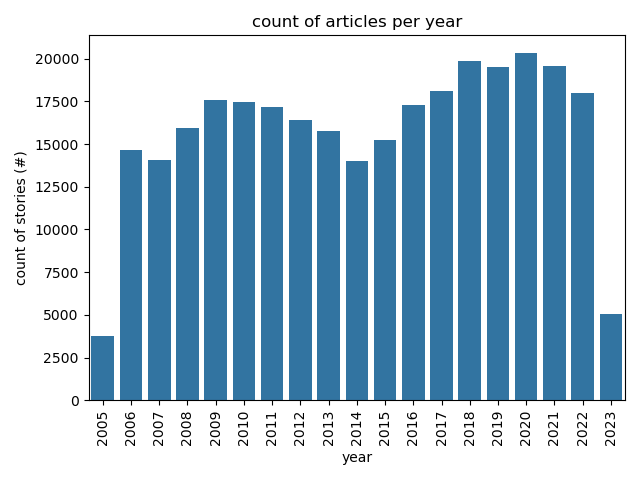

# Descriptive Stats

|

||||

|

||||

Articles Per Year

|

||||

|

||||

|

||||

|

||||

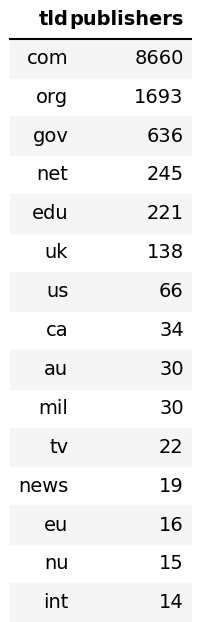

==


# Descriptive Stats

Common TLDs


==


# Descriptive Stats

Post Process

| metric            |  value |
|:------------------|-------:|
| total stories     | 251553 |
| total related     | 815183 |
| publishers        |    223 |
| authors           |  23809 |
| max year          |   2022 |
| min year          |   2006 |
| top level domains |    234 |


===


# Experiments

1. **clustering** on link similarity. <!-- .element: class="fragment" -->
2. **classification** on link similarity. <!-- .element: class="fragment" -->
3. **classification** on sentence embedding. <!-- .element: class="fragment" -->
4. **classification** on sentiment analysis. <!-- .element: class="fragment" -->
5. **regression** on emotional classification over time and publication. <!-- .element: class="fragment" -->


===


# Experiment 1

Setup

- Create one-hot encoding of links between publishers. <!-- .element: class="fragment" -->
- Cluster the encoding. <!-- .element: class="fragment" -->
- Expect similar publications in the same cluster. <!-- .element: class="fragment" -->
- Use PCA to visualize clusters. <!-- .element: class="fragment" -->

Note:

Principal Component Analysis:

- a statistical technique for reducing the dimensionality of a dataset.
- a linear transformation into a new coordinate system where (most of) the variation in the data can be described with fewer dimensions than the original data.


==
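
# Experiment 1

PCA (sketch)

The PCA described in the previous slide's notes can be sketched in a few lines of NumPy via the singular value decomposition of the mean-centred data. This is a generic illustration, not necessarily what the project ran (scikit-learn's `sklearn.decomposition.PCA` is the usual off-the-shelf choice); `pca` here is a hypothetical helper.

```python
import numpy as np


def pca(X: np.ndarray, n_components: int = 2) -> tuple[np.ndarray, np.ndarray]:
    """Project rows of X onto their top principal components.

    Returns (projected points, fraction of variance explained per component).
    """
    Xc = X - X.mean(axis=0)                   # centre each column
    _, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    projected = Xc @ Vt[:n_components].T      # coordinates in the new basis
    explained = S ** 2 / np.sum(S ** 2)       # variance share of each component
    return projected, explained[:n_components]


rng = np.random.default_rng(0)
# Toy data: 100 points in 3-D that vary almost entirely along one direction.
t = rng.normal(size=(100, 1))
X = np.hstack([t, 2 * t, rng.normal(scale=0.1, size=(100, 1))])
points, ratio = pca(X, n_components=2)
print(points.shape, ratio.round(3))
```

For the link matrix, each row (publisher) would be a point and the first two columns of `projected` the scatter-plot coordinates.

==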


# Experiment 1

One Hot Encoding

| publisher | nytimes | wsj | newsweek | ... |
|:----------|--------:|----:|---------:|----:|
| nytimes   |       1 |   1 |        1 | ... |
| wsj       |       1 |   1 |        0 | ... |
| newsweek  |       0 |   0 |        1 | ... |
| ...       |     ... | ... |      ... | ... |


==


# Experiment 1

n-Hot Encoding

| publisher | nytimes | wsj | newsweek | ... |
|:----------|--------:|----:|---------:|----:|
| nytimes   |      11 |   1 |      141 | ... |
| wsj       |       1 |  31 |        0 | ... |
| newsweek  |       0 |   0 |        1 | ... |
| ...       |     ... | ... |      ... | ... |


==


# Experiment 1

Normalized n-Hot Encoding

| publisher | nytimes | wsj | newsweek | ... |
|:----------|--------:|----:|---------:|----:|
| nytimes   |       0 | 0.4 |      0.2 | ... |
| wsj       |     0.2 |   0 |      0.4 | ... |
| newsweek  |     0.0 | 0.0 |      0.0 | ... |
| ...       |     ... | ... |      ... | ... |


==
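
# Experiment 1

Encodings (sketch)

All three encodings can be derived from one count matrix. A sketch in NumPy, assuming links arrive as hypothetical (`source`, `target`) publisher pairs; the row-wise normalization (each row sums to 1) is one plausible reading of "normalized", and the illustrative numbers in the table above may come from a different scheme.

```python
import numpy as np


def link_encodings(pairs: list[tuple[str, str]]):
    """Return (publishers, n-hot counts, one-hot, row-normalized) link matrices."""
    publishers = sorted({p for pair in pairs for p in pair})
    index = {p: i for i, p in enumerate(publishers)}
    counts = np.zeros((len(publishers), len(publishers)))
    for src, dst in pairs:
        counts[index[src], index[dst]] += 1      # n-hot: number of links
    onehot = (counts > 0).astype(float)          # one-hot: any link at all
    row_sums = counts.sum(axis=1, keepdims=True)
    # Rows with no links stay all-zero instead of dividing by zero.
    normalized = np.divide(counts, row_sums, out=np.zeros_like(counts), where=row_sums > 0)
    return publishers, counts, onehot, normalized


pairs = [("nytimes", "wsj"), ("nytimes", "wsj"), ("nytimes", "newsweek"), ("wsj", "nytimes")]
pubs, counts, onehot, normalized = link_encodings(pairs)
print(pubs)
print(counts)
print(normalized)
```

==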

|

||||

|

||||

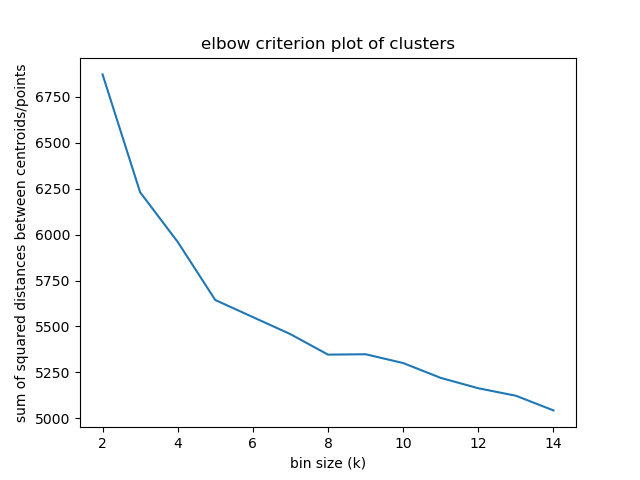

# Experiment 1

|

||||

|

||||

Elbow criterion

|

||||

|

||||

|

||||

|

||||

Note:

|

||||

|

||||

The elbow method looks at the percentage of explained variance as a function of the number of clusters:

|

||||

|

||||

One should choose a number of clusters so that adding another cluster doesn't give much better modeling of the data.

|

||||

|

||||

Percentage of variance explained is the ratio of the between-group variance to the total variance,

|

||||

|

||||

==
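
# Experiment 1

Elbow (sketch)

A sketch of the elbow curve in NumPy: a bare-bones k-means (Lloyd's algorithm, started from farthest-point seeds) run for several k, reporting the explained-variance ratio from the notes above (between-group sum of squares over total sum of squares). This k-means is a minimal stand-in; the slides don't say which implementation or initialization the project used.

```python
import numpy as np


def farthest_point_init(X: np.ndarray, k: int, seed: int = 0) -> np.ndarray:
    """Start from a random point, then keep adding the point farthest from all chosen centers."""
    rng = np.random.default_rng(seed)
    centers = [X[rng.integers(len(X))]]
    for _ in range(1, k):
        nearest = np.min([((X - c) ** 2).sum(axis=1) for c in centers], axis=0)
        centers.append(X[nearest.argmax()])
    return np.array(centers)


def kmeans(X: np.ndarray, k: int, iters: int = 100) -> np.ndarray:
    """Minimal Lloyd's k-means; returns one cluster label per row."""
    centers = farthest_point_init(X, k)
    for _ in range(iters):
        dists = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = dists.argmin(axis=1)
        # Move each center to its members' mean (keep it if the cluster is empty).
        new_centers = np.array([X[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
                                for j in range(k)])
        if np.allclose(new_centers, centers):
            break
        centers = new_centers
    return labels


def explained_variance(X: np.ndarray, labels: np.ndarray) -> float:
    """Between-group sum of squares divided by the total sum of squares."""
    total = ((X - X.mean(axis=0)) ** 2).sum()
    within = sum(((X[labels == j] - X[labels == j].mean(axis=0)) ** 2).sum()
                 for j in np.unique(labels))
    return float((total - within) / total)


rng = np.random.default_rng(1)
# Toy data: three well-separated blobs, so the curve should flatten after k = 3.
X = np.vstack([rng.normal(c, 0.3, size=(50, 2)) for c in ((0, 0), (5, 5), (0, 5))])
for k in range(1, 7):
    print(k, round(explained_variance(X, kmeans(X, k)), 3))
```

==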

|

||||

|

||||

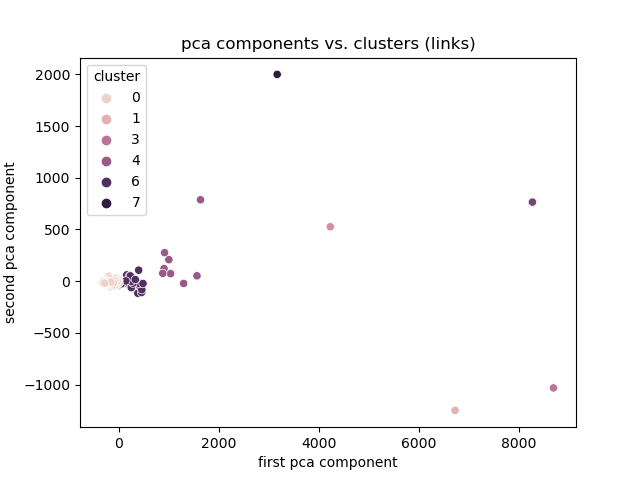

# Experiment 1

|

||||

|

||||

Link Magnitude

|

||||

|

||||

|

||||

|

||||

==

|

||||

|

||||

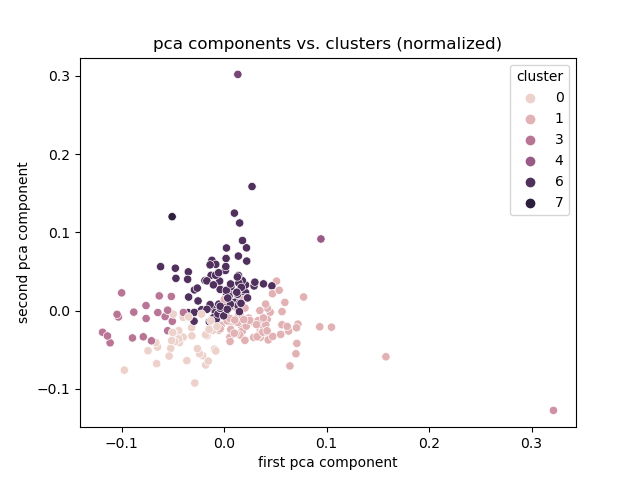

# Experiment 1

|

||||

|

||||

Normalized

|

||||

|

||||

|

||||

|

||||

==

|

||||

|

||||

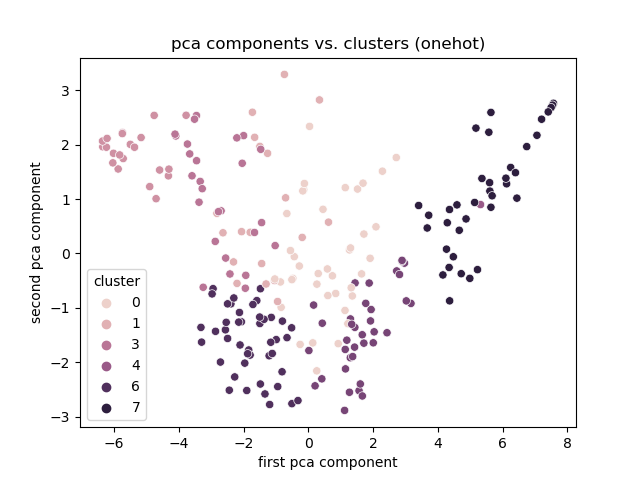

# Experiment 1

|

||||

|

||||

Onehot

|

||||

|

||||

|

||||

|

||||

==


# Experiment 1

Discussion

- Best encoding: one-hot. <!-- .element: class="fragment" -->
    - Other encodings cluster on total link volume.
    - Produces clusters, but no explanation of them.
- Limitation: need the link encoding to cluster.
    - Smaller publishers might not link very much.


===


# Experiment 2

Setup

- Create features: <!-- .element: class="fragment" -->
    - Publisher frequency.
    - Reuse link encodings.
- Create classes: <!-- .element: class="fragment" -->
    - Join bias classifications.
- Train classifier. <!-- .element: class="fragment" -->

Note:


==
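
# Experiment 2

Classifier (sketch)

The setup slide does not name the classifier. As a hedged illustration only: a nearest-centroid classifier over the (PCA-reduced) link features, where each bias label gets the mean of its training publishers' vectors and a publisher takes the label of the closest centroid. `CentroidClassifier` and the toy data are hypothetical.

```python
import numpy as np


class CentroidClassifier:
    """Assign each sample the label whose class mean (centroid) is nearest."""

    def fit(self, X: np.ndarray, y: list[str]) -> "CentroidClassifier":
        y = np.asarray(y)
        self.labels_ = np.unique(y)  # sorted label names
        self.centroids_ = np.array([X[y == label].mean(axis=0) for label in self.labels_])
        return self

    def predict(self, X: np.ndarray) -> np.ndarray:
        dists = ((X[:, None, :] - self.centroids_[None, :, :]) ** 2).sum(axis=2)
        return self.labels_[dists.argmin(axis=1)]


# Hypothetical 2-D features (e.g. first two PCA components) for rated publishers.
X_train = np.array([[-2.0, 0.1], [-1.8, -0.2], [0.1, 0.0], [-0.1, 0.2], [2.1, 0.0], [1.9, -0.1]])
y_train = ["left", "left", "center", "center", "right", "right"]
clf = CentroidClassifier().fit(X_train, y_train)
print(clf.predict(np.array([[-1.5, 0.0], [1.7, 0.3]])))
```

On the real data, part of the rated publishers would be held out to estimate accuracy before labeling unrated ones.

==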


# Experiment 2

Descriptive stats

| metric      | value     |
|:------------|:----------|
| publishers  | 1582      |
| labels      | 6         |
| left        | 482       |
| center      | 711       |
| right       | 369       |
| agree range | [0.0-1.0] |


==

|

||||

|

||||

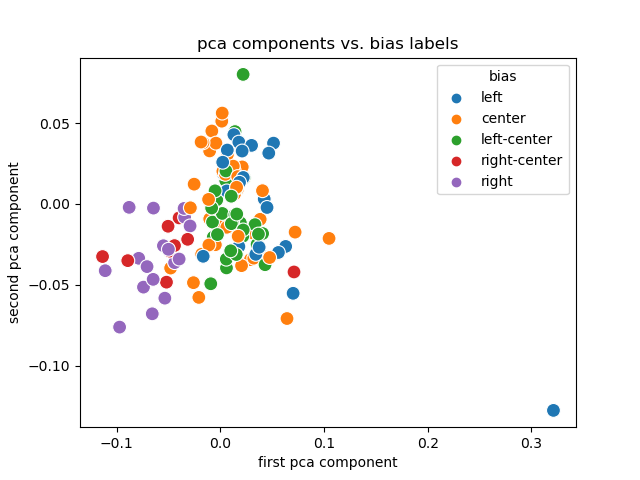

# Experiment 2

|

||||

|

||||

PCA + Labels

|

||||

|

||||

|

||||

|

||||

==


# Experiment 2

Discussion

- Link encodings (and their PCA) are useful. <!-- .element: class="fragment" -->
    - Labels are (sort of) separated and clustered.
    - Creating them for smaller publishers is trivial.


==


# Experiment 2

Limitations

- Dependent on accurate ratings. <!-- .element: class="fragment" -->
- Ordinal ratings not available. <!-- .element: class="fragment" -->
- Dependent on accurate joining across datasets. <!-- .element: class="fragment" -->
- The entire publication is rated, not its authors. <!-- .element: class="fragment" -->
- Don't know what to do with the community rating. <!-- .element: class="fragment" -->


===


# Experiment 3

Setup


==


# Limitations

- Many different authors under the same publisher. <!-- .element: class="fragment" -->
- Publishers use syndication. <!-- .element: class="fragment" -->
- Bias ratings are biased. <!-- .element: class="fragment" -->


===


# Questions


===


<!-- .section: id="references" -->

# References

[1]: Stewart, A.J. et al. 2020. Polarization under rising inequality and economic decline. Science Advances. 6, 50 (Dec. 2020), eabd4201. DOI:https://doi.org/10.1126/sciadv.abd4201.

Note: